Holy Shit, It's Over

I for one welcome our new PM overlords

Programming (as in writing code for a living) as a career is dead.

RIP Programming, 1955 - 2025. All good things must come to an end.

I’ve been at my new job for close to two months now. Many of the product managers (PMs) I work with are leaning heavily into AI tooling, specifically agentic coding. I’ve had a PM show me a “demo” of an idea they had, except the demo was a fully functioning prototype with code written by Claude Code.

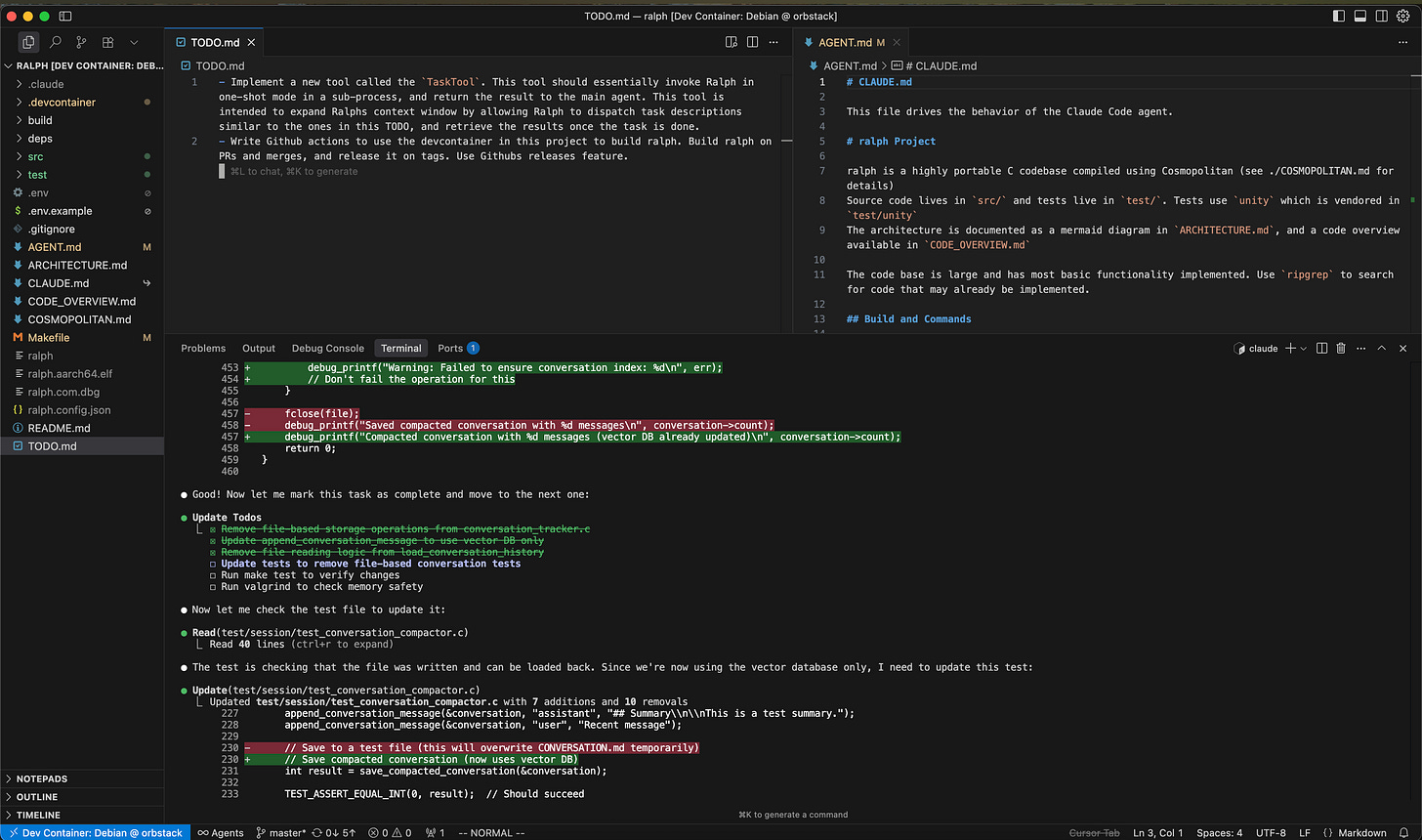

Claude Code is impressive, if expensive. As I write this, Claude has been working on a task for a codebase it built for about 10 minutes now. Autonomously. I’ve been working with Claude in much the same way as I would work with a junior/intermediate programmer. Much in the same way as I imagine the PMs have been interacting with Claude, except with maybe more persnickety steering on my part.

The codebase Claude has been working on is called ralph and it’s based on the following blog post:

Co-workers and peers are probably sick of me spamming that link to them. I can’t articulate why, but this article feels like it’s showing us a paradigm shift in software development in 2025. I’ve experimented with the techniques outlined in this article over 3 codebase implementations, learning more and refining my techniques as I go. The final iteration that Claude Code is working on now is my most successful attempt thus far.

The first ralph was written in Python. I wrote a main software specification, then worked with Cursor’s agent pre-filled with .cursorrules for Python programming to flesh the specification out. I then wrote a prompt for it to read the specs, write a task list and start working the task list. I set up the project to use ruff and pytest as back-pressure to the AI. It… worked. It managed to build itself an OpenAI compatible client and construct a loop of gathering input, sending the message to the AI with a prompt, and returning the result.

At some point I looked at the code it was generating and was horrified. Even though the software ran and was working, it was inefficient and full of duplicated code, placeholders and TODO comments. Tests were written so that they “passed” but didn’t actually test anything, which hid the unimplemented features from me. I argued with Cursor for a while to fix it, but determined the whole thing was a loss and that I was going to try again.
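To make the “passing but not testing” failure mode concrete, here is a minimal sketch in Python. The `slugify` function and both checks are hypothetical illustrations, not code from the actual project.

```python
def slugify(title):
    # Hypothetical placeholder: the feature was never actually implemented.
    # TODO: lowercase the title and replace spaces with hyphens
    return title


def check_vacuous():
    # Always "passes": it only verifies that some value came back.
    result = slugify("Hello World")
    assert result is not None


def check_real():
    # Pins down the expected behaviour, so the placeholder is exposed.
    assert slugify("Hello World") == "hello-world"
```

Running `check_vacuous()` succeeds against the placeholder, while `check_real()` raises `AssertionError`. A suite made of the first kind of check is green while the feature is still missing.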

The second ralph was written in Ruby. An odd choice, I understand, but I work in a Ruby shop and I wanted to be able to show my co-workers how all this works if I was successful. This whole paradigm is powered by an HTTP POST - there’s no programming language that I work in where you can’t perform an HTTP POST. It theoretically could be implemented in any language, and I wanted to test that.
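As a rough illustration of how little is needed, here is a sketch of that HTTP POST and one turn of the input/send/reply loop, using only Python’s standard library. The endpoint path and response shape follow the OpenAI-style chat completions convention; the function names and the injected `send` callable are my own assumptions for illustration, not code from any ralph.

```python
import json
import urllib.request


def build_chat_request(base_url, api_key, model, messages):
    """Build (but do not send) a POST for an OpenAI-compatible chat endpoint."""
    body = json.dumps({"model": model, "messages": messages}).encode("utf-8")
    return urllib.request.Request(
        url=base_url.rstrip("/") + "/chat/completions",
        data=body,
        headers={
            "Content-Type": "application/json",
            "Authorization": f"Bearer {api_key}",
        },
        method="POST",
    )


def extract_reply(response_json):
    """Pull the assistant text out of a chat-completions style response."""
    return response_json["choices"][0]["message"]["content"]


def agent_turn(send, history, user_input):
    """One loop iteration: record the input, send the history, record the reply.

    `send` is a hypothetical callable that takes the message history and
    returns the decoded JSON response (for example by posting the request
    built above with urllib.request.urlopen).
    """
    history.append({"role": "user", "content": user_input})
    reply = extract_reply(send(history))
    history.append({"role": "assistant", "content": reply})
    return reply
```

Keeping the network call behind `send` means the loop itself can be exercised with a fake response, which is also what makes it easy to port to any language that can issue a POST.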

This ralph was implemented with Claude Code. I had seen the amazing prototypes my PM co-workers were building and I wanted to give it a spin. I repeated much of the same procedure above, except I hand-wrote more code and refactored Claude’s work to nudge Claude in the right direction. I got further on this version, to a point that I was able to test out the recursive nature of the ralph idea - spinning out sub-agents to perform sub-tasks and using the main agent as a co-ordinator. I managed to spend $20 on the Anthropic API in 5 minutes and not really have any usable work to show for it.

I also eventually abandoned this ralph. Claude had built an agent that conformed to the specs, but when I updated or added to the specs, Claude had a hell of a time with the code it had written. It didn’t design anything to be easily extensible, and I found that it largely coded itself into multiple corners that it would have to perform a re-write to get out of. I also found that the rspec and standardrb back-pressure was too weak, something I found with the first Python ralph (using ruff and pytest) but didn’t pay too much mind to.

The third, shortest-lived ralph was going to be in Clojure. I believe any language can be a ralph, and I couldn’t stop thinking about the refrain “Data is code, code is data” within the Lisp community and its implications for a self-improving agent. I worked in Clojure a long time ago and remember thoroughly enjoying it, so I thought it would be an ideal platform to try to build a ralph.

This ralph didn’t even make it to the OpenAI loop stage that starts every ralph implementation I’ve tried. I had a brief discussion with a co-worker about LLMs and their inability to code Clojure, and quickly changed my mind. I also found setting up a back-pressure capable Clojure dev environment enough of a bear to consider it a non-starter when it came to runtime requirements.

The latest ralph, the current and most successful ralph, I decided to implement in C, and then decided to throw Cosmopolitan into the mix for extra spiciness. I love the Cosmopolitan project, and I’ve tried to leverage it in ambitious projects before with mixed success (I damn near almost got Python 3.11 compiled statically by Cosmopolitan, but alas OpenSSL/pip issues defeated me). I figured the LLM would have a lot of knowledge of programming C and I planned to rely on the fact that Cosmopolitan is a drop in replacement for gcc and friends. C is also statically typed and compiled. That’s a lot of back-pressure to the ralph loop, and with automated unit tests thrown into the mix, ralph gets a lot of feedback automatically when it makes mistakes.
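For readers unfamiliar with the term, back-pressure here just means automated checks whose failures get fed back to the agent. The sketch below shows the general shape in Python. The function names and the idea of returning the failures as strings for the next prompt are my assumptions, not a description of how ralph or Claude Code actually do it.

```python
import subprocess


def run_check(name, argv):
    """Run one check; return None on success or a feedback string on failure."""
    proc = subprocess.run(argv, capture_output=True, text=True)
    if proc.returncode == 0:
        return None
    output = (proc.stdout + proc.stderr).strip()
    return f"[{name}] exited with {proc.returncode}\n{output}"


def back_pressure(checks):
    """Run every (name, argv) check and collect the failure messages.

    The returned list is what would be appended to the agent's next prompt.
    """
    feedback = []
    for name, argv in checks:
        message = run_check(name, argv)
        if message is not None:
            feedback.append(message)
    return feedback
```

In a C project the checks might be something like `[("build", ["make"]), ("tests", ["make", "test"])]`, where the `test` target is hypothetical. The more of these that can fail loudly (compiler, type checker, unit tests, memory checker), the less the agent can drift without noticing.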

This one I did with Claude Code, a CLAUDE.md that started empty and a devcontainer running a custom Docker image I whipped up, which was basically Debian running a Cosmopolitan toolchain pre-configured in paths and environment variables.

In previous ralph attempts, I tried to dogmatically follow the paradigm by writing specifications for the application, iterating on the specifications with whichever AI agent I was working with, and then when I felt the specifications were correct, I’d ask it to start implementing them. This time, I took a more iterative approach with Claude Code - we started small. I want a basic HTTP client. I want to be able to make a POST request to an arbitrary URL. I want to be able to create this structure in JSON. Slowly we iterated and built, and when Claude did something dumb, I adjusted the CLAUDE.md file.

Claude Code doesn’t know how to do test-driven-development (TDD), even when you tell it to. Apparently the gestalt of the internet didn’t understand you need to start by writing a failing test. I had to devote a few lines to the CLAUDE.md to explain the red/green TDD loop. I learned early to tell Claude to search for functionality before it started to implement something. As the Ralph article states, “It has defects, but these are identifiable and resolvable through various styles of prompts.”
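The red/green ordering is easier to show than to describe. This is a toy sketch in Python with a hypothetical `word_count` feature. The point is only the order: the check exists and fails before the implementation does.

```python
def run_check(check, implementation):
    """Return 'green' if the check passes against the implementation, else 'red'."""
    try:
        check(implementation)
    except (AssertionError, NotImplementedError):
        return "red"
    return "green"


# Step 1 (red): write the check before the feature exists.
def check_word_count(word_count):
    assert word_count("red green refactor") == 3
    assert word_count("") == 0


def word_count_stub(text):
    raise NotImplementedError("not written yet")


# Step 2 (green): write the minimum code that makes the check pass.
def word_count(text):
    return len(text.split())


print(run_check(check_word_count, word_count_stub))  # red
print(run_check(check_word_count, word_count))       # green
```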

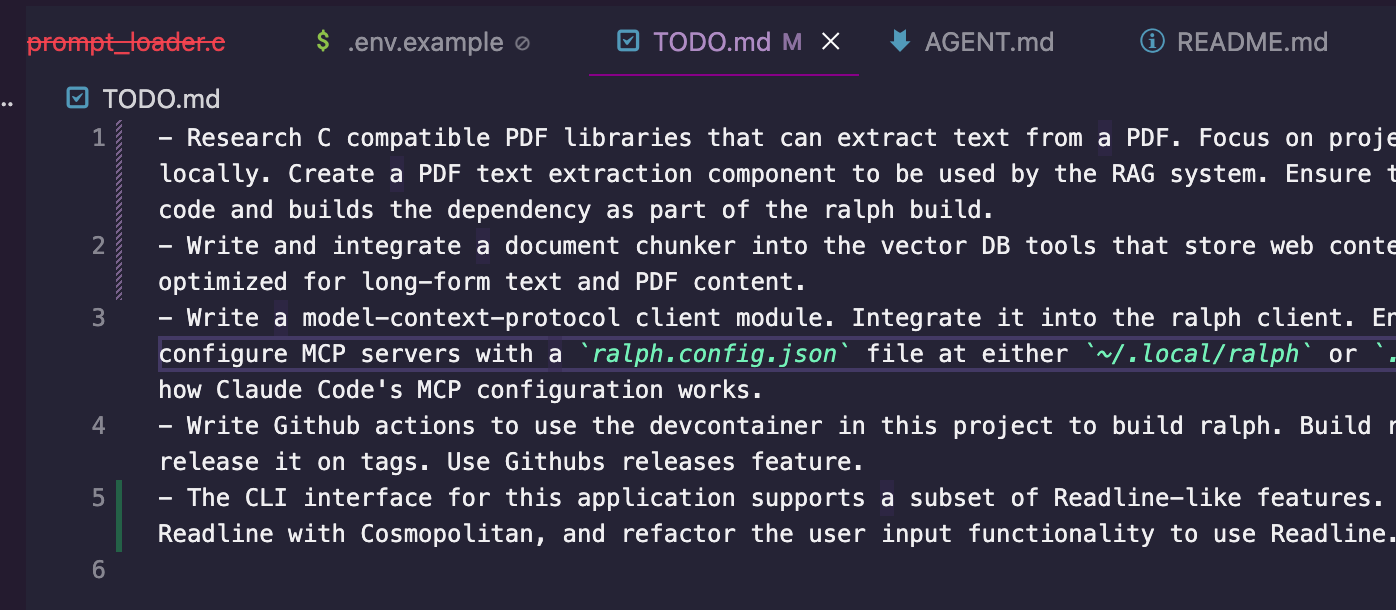

I’ve been working on ralph consistently for about 6 days now. In those 6 days, I’ve gone from a blank slate to a functioning portable AI agent that supports OpenAI, Anthropic and local LM models, has a suite of tools to aid in software development including an internet browser for research (as opposed to curl), a configurable MCP server, a built in vector DB, RAG, and is capable of performing long running complex tasks autonomously.

Basically ralph is Claude Code.

I mean, it’s neat and all, but what’s the point of building a Claude Code clone when:

- so many others are also cloning Claude Code
- there are better, more reliable AI coding tools like aider
- Claude Code exists (I mean, I wrote it with Claude Code)

This goes back to the death of programming.

The days of a person opening a text editor to manually write code and compile it are gone. Some of us might not realize it yet, but it’s true. The programs of the next generation of computing will be written by AI. I’m very confident of this. My experiences writing ralph have convinced me - in less than a week I’ve rebuilt something that took me 9 months to build in Python from scratch a year ago, and it also solves a fundamental problem I was working on around multi-architecture support and getting my binaries running on other people’s machines.

That’s insane. That’s an industry changing paradigm shift.

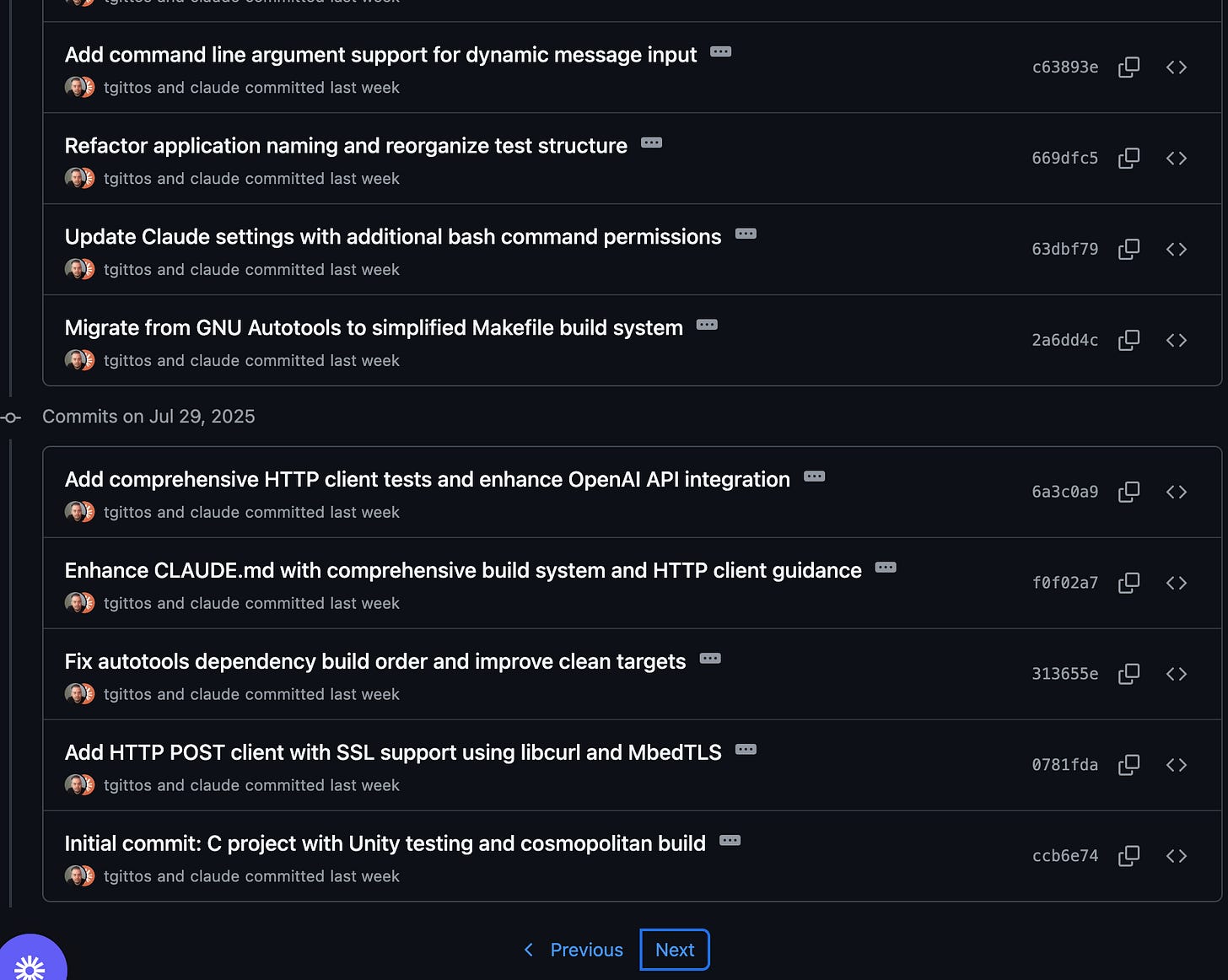

As of right now, this is what my development on ralph looks like:

1. I write a line in a `TODO.md` file
2. I boot up Claude Code, and say “Read the `TODO.md`, and work on the item at the top of the list. Remove it and commit your work when you complete the task.”
3. Claude Code writes all the code, tests, updates the Makefile, checks the final C program with `valgrind`, then commits the result
4. Clear Claude Code’s context with a `/clear`, and repeat from step 2
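The steps above are simple enough to sketch as a driver loop. This is an illustrative Python version under a few assumptions: one task per line in the TODO file, and a hypothetical `run_agent` callable standing in for “start a fresh agent session and give it this task”. Unlike my actual workflow, where the agent removes the item itself, this sketch has the driver pop it.

```python
from pathlib import Path


def pop_top_item(todo_path):
    """Remove and return the first non-blank line of the TODO file, or None."""
    path = Path(todo_path)
    lines = path.read_text(encoding="utf-8").splitlines()
    for index, line in enumerate(lines):
        if line.strip():
            remaining = lines[:index] + lines[index + 1:]
            text = "\n".join(remaining) + ("\n" if remaining else "")
            path.write_text(text, encoding="utf-8")
            return line.strip()
    return None


def work_through(todo_path, run_agent):
    """Hand each item to a fresh agent session until the list is empty."""
    completed = []
    while (item := pop_top_item(todo_path)) is not None:
        # Hypothetical: one clean context per item, mirroring the /clear step.
        run_agent(item)
        completed.append(item)
    return completed
```

Starting every item from a clean context is the important design choice here: each task is judged against the codebase and the tests, not against whatever the agent half-remembers from the previous task.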

I don’t write any code. None. I haven’t looked at the content of any of the source files outside of a handful of manual tweaks to ralph’s core prompt. Claude Code frequently forgets directives in your CLAUDE.md file, especially as its context window fills up and you near compaction. Sometimes Claude makes non-optimal design decisions - if I can catch it in the act, I can stop it working and guide it to a better design. Most of the time I only catch it after the fact.

It’s not been all roses and sunshine. I’ve had to babysit Claude Code through at least two significant refactors around conversation tracking and serialization, and how it manages the AI agent’s tools systems. I’ve had to tell Claude Code to re-write the mess of a Makefile it created. Multiple times. At one point, its code organization was so haphazard I noticed it was using ~20k tokens just to find code - I had Claude Code perform a re-org of the codebase to make things more logically laid out to aid in its searching.

At first, these experiences are frustrating and may lead you to think that maybe LLMs aren’t so smart after all, that none of our jobs are actually at risk, and that there will be a snap back in the future. Then you sigh, instruct the LLM to analyze the problem, write its findings into a TODO.md list item, /clear Claude Code and ask it to fix it. And it does.

Then you realize this is just how you fix bugs now. This is how you realize your code needs to be re-organized. This is how you find leaky designs and plug them. The agent will start to struggle and seem to get dumber, and that exposes a larger problem with its code. Then you get the agent to fix it.

I manually verify Claude Code’s work by running ralph and interacting with it. Whenever I notice something wrong, or something that I don’t like, I have a chat with Claude about it. Usually, I’ll tell Claude to just use ralph directly (which is configured to also talk to Claude Sonnet through the Anthropic API) and fix the thing I don’t like.

It’s… it’s weird. I’m not going to lie. To watch an AI test an AI and make adjustments autonomously is wild.

It should be obvious by now that the final goal is to get to a point where I can use ralph instead of Claude Code. Then ralph will be able to work on ralph under the guidance of an experienced software developer, and together they’ll be able to do things that neither could have done on their own. Eventually I foresee being able to work on ralph by adding items to TODO.md while ralph studiously works through the list at the same time.

Software will no longer be “written”, rather it will be “grown”.

I’ve always described programming as more akin to gardening than engineering. We pretend we’re performing engineering by performing iterative design, iterative development, SCRUM, requirements gathering, product architecture briefs, etc. But the systems grow organically as the needs of the problem or organization change. Where you end up might not look anything like where you started, even with all of your engineering efforts.

Now, we can quite literally “grow” code from a seed. The seed is ralph, Claude Code, aider and other programming AI agents like them. The specific seed used isn’t as important as the ability to grow it, although it will have some impact. “Programming” skills in this software “growth” world will be the ability to articulate requirements, use tools like AGENT.md to steer the agent, recognize meta-problems with the codebase that make the AI less effective, and basically guide and shape the software through the AI rather than directly with code.

My seed is ralph. I built it in Cosmopolitan C because I want it to be a universal seed that I can use on any system with any programming language and start to build software. I highly recommend anyone who wishes to make it through this great upheaval to build a ralph. In this world where product managers and clever lay people can use an AI agent to build workable software, the people in demand are going to be the ones who can build these AI agents.

Obviously, the further up the chain you go, the safer you are. Training foundation models is literally worth millions per year in salary right now, if you can get in. Being able to build AI based tooling that other people can use to build software is going to be a good runner-up option. Learning the agentic loop, learning how to steer LLMs, learning how to manage context window sizes and learning back-pressure techniques are the new table stakes for programming now.

Even if you don’t build a ralph yourself, you should build something using some kind of programming agentic AI and never look at the source code. The whole process is eye opening and kind of amazing. I’ve met programmers (and I started this way too) who read LLM written code and thought “Wow, this is trash. I’m safe - AI can’t write the kind of code that I can”, and those people don’t get it. It’s not about code quality - honestly, it never was. It’s about creating software that people find useful. As long as the thing does what the user wants it to do, who cares what the code looks like?

I built my seed, ralph, using agentic AI coding techniques in a language I’m barely capable of writing, using a cutting edge compiler with a very small community footprint in 7 days.

Now, these systems aren’t cheap. I use a Claude Max subscription ($200.00/mo) and also pay for API usage à la carte (I have reasons; I also have an API key for OpenAI). I get pinged about 1-2 times a week for $20 charges on the API. I’m paying a lot of money to use these AIs to do my job for me.

It took me 7 days only because I was extra persnickety about how the LLM built the code, since I’m paying for it myself. I don’t want it to spin around wasting tokens looking for code because it’s poorly designed and organized. I’m a programmer, and I’m doing this mostly to build new skills, so I’m price sensitive. If I didn’t care how much money it cost to do this (say, because I couldn’t do it myself), I probably could have got the job done in 4 days.

That accountant who’s struggling with a manual process of copying data out of one program into another - who uses Claude Code to build a tool in a day to automate their work and save 8 hours per week - will just expense it. Even if it cost thousands of dollars to build. The company will gladly pay, and if the accountant built an impressive enough tool, they will fire the accountant and replace them with their tool.

Programmers are not needed anymore.

Custom software development is a commodity now.

Seven days to build a complex autonomous agentic AI coding agent that runs on every platform as a single binary. Seven days to re-write a Python codebase in a new language, a codebase that took 9 months to build by hand from scratch, and the new code is faster and truly portable vs. the self-expanding Nuitka-based (great project) monstrosity I built.

In fact, I don’t just recommend programmers build something with an AI agent, I recommend they rebuild something they’ve already built. In a language totally unfamiliar to them. Then they can re-evaluate how safe they feel in their jobs.